
File compression programs such as Zip, Pkzip, and Winzip can make a file smaller so that it takes up less space on your hard disk. These programs can then reverse the process, or uncompress the file(s), and restore them exactly as they were before. This is called lossless compression. There are also lossy compression programs, in which the restored files are close to, but not exactly the same as, the original files. This might not seem useful for data files (like databases, text, or spreadsheets), but it can be critically important for things like movies or photographic images. We are only going to discuss lossless compression today.

The very best programs (in terms of compression ratio) use advanced techniques. For many more details and a history of progress, see Matt Mahoney’s Data Compression Explained. Matt Mahoney also runs the Hutter Prize, a contest (with cash prizes) for the best programs in a specific data compression task. For reference, the current record for compressing the first 100MB of Wikipedia content has a compression ratio (compressed size divided by original size) of 0.15285.

In this blog post I will explain a specific technique that all the top compression programs use, called Bitwise Compression. We will get to that in a bit, but first we need to discuss some basic data compression and information theory.

Basic Compression

The basic idea of data compression is that things that occur often can be assigned codes shorter than their original length. In other words, more likely things should be assigned shorter codes. Morse Code tries to be efficient in this way by assigning the most common letter, “E”, the shortest code (see note 1). Let’s look at a toy example: suppose that a file contains just 4 symbols, and we want to compress it. Suppose that the 4 symbols are A, B, C, and D, with the following probabilities:

| SYMBOL | PROBABILITY |
|---|---|
| A | 0.75 |
| B | 0.15 |
| C | 0.05 |
| D | 0.05 |

The probabilities can be thought of either as the likelihood of those symbols appearing in future files that we will see, or as the fraction of the symbols in a particular file that we are trying to compress. Note that in endeavors like the Hutter Prize, there is one specific file to compress, and we can optimize for that file as much as we want; general compression programs do not have that luxury.

Given the above symbols and probabilities, we might ask what the optimal way to encode them in bits is. A solution to this problem, called Huffman Coding, was invented by David A. Huffman in his 1952 paper. He asked the question: if I assign a bit sequence to each of those symbols, what assignment produces the shortest output bit sequence on average? Think of this like Morse Code, where instead of assigning each symbol a certain sequence of dots and dashes, we assign it a certain sequence of 0s and 1s.

For example, suppose we just decided to assign A=00, B=01, C=10, and D=11, that is, 2 bits for each symbol. This encoding can be reversed, because we just take each pair of bits and translate it back into A, B, C, or D as we go. What is the average number of bits used per symbol for this code? By inspection, the answer is 2.0 bits per symbol. Dr. Huffman says we can do better. Using his algorithm, we get the following optimal assignment (see note 2), where each symbol's contribution is its code length times its probability:

| SYMBOL | CODE | LENGTH (bits) | PROBABILITY | CONTRIBUTION (bits) |
|---|---|---|---|---|
| A | 1 | 1 | 0.75 | 0.75 |
| B | 01 | 2 | 0.15 | 0.30 |
| C | 001 | 3 | 0.05 | 0.15 |
| D | 000 | 3 | 0.05 | 0.15 |
| TOTAL | | | | 1.35 bits/symbol |
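Huffman's algorithm itself is short: repeatedly merge the two least likely entries until a single tree remains, putting a 0 in front of every code on one side of each merge and a 1 in front of every code on the other. Below is a minimal Python sketch, assuming the probabilities from the table; `huffman_codes` is an illustrative name, not code from the accompanying repository. Ties can be broken either way, so this version labels C and D the other way round (C=000, D=001), which gives the same lengths and the same 1.35 bits/symbol.

```python
import heapq
from itertools import count

def huffman_codes(probs):
    """Build a Huffman code by repeatedly merging the two least likely nodes."""
    tiebreak = count()  # keeps the heap from ever comparing the dicts
    # Each heap entry: (probability, tiebreak, {symbol: code built so far})
    heap = [(p, next(tiebreak), {sym: ""}) for sym, p in probs.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        p0, _, zero_side = heapq.heappop(heap)  # least likely subtree gets a 0
        p1, _, one_side = heapq.heappop(heap)   # next least likely gets a 1
        merged = {s: "0" + c for s, c in zero_side.items()}
        merged.update({s: "1" + c for s, c in one_side.items()})
        heapq.heappush(heap, (p0 + p1, next(tiebreak), merged))
    return heap[0][2]

probs = {"A": 0.75, "B": 0.15, "C": 0.05, "D": 0.05}
codes = huffman_codes(probs)
avg = sum(probs[s] * len(codes[s]) for s in probs)
print(codes)                 # {'C': '000', 'D': '001', 'B': '01', 'A': '1'}
print(f"{avg:.2f} bits/symbol")  # 1.35 bits/symbol
```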

This assignment achieves 1.35 bits/symbol. Huffman showed that this is the best we can do by assigning a whole bit sequence to each symbol. Are there other methods that could do better? Claude Shannon, who invented the entire field of Information Theory with his two-part blockbuster paper published in the Bell System Technical Journal in 1948, said yes. He said that the information content of a symbol stream could be measured by something called Entropy, in units of bits. The formula for computing Entropy is

\[
E=-\sum_{i=0}^{N-1} p_i \log_2 p_i
\]

where \(N\) is the number of distinct symbols, \(p_i\) is the probability of symbol \(i\), and \(\log_2\) is the logarithm to the base 2, which, in case you have forgotten, can be calculated as

\begin{align}
\log_2 x = \frac{\log x}{\log 2}
\end{align}

where log is the logarithm to your favorite base (such as the more common 10 or e). The units of Entropy are bits/symbol, so the number of bits needed for an entire file is the Entropy times the number of symbols in the file.

For our toy ABCD example, the entropy we get is

\[
E=1.15402 \textrm{ bits/symbol}
\]
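As a quick check of the arithmetic, the entropy formula translates directly into a few lines of Python (a minimal sketch; `entropy` is just an illustrative name):

```python
import math

def entropy(probs):
    """Shannon entropy in bits/symbol: E = -sum(p_i * log2(p_i))."""
    return -sum(p * math.log2(p) for p in probs if p > 0)  # 0 * log 0 is taken as 0

E = entropy([0.75, 0.15, 0.05, 0.05])
print(f"{E:.5f} bits/symbol")  # 1.15402 bits/symbol
```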

Now, Shannon did not know a practical way to build an encoding method that could achieve this, but he showed that it was possible. One way to do better than 1.35 bits/symbol would be to encode pairs of symbols with Huffman Codes. That is, we make a table like the one above, but the symbols are now AA, AB, AC, AD, BA, etc. We get the probabilities of these combinations by multiplying the probabilities of the individual symbols together (putting aside conditional probabilities for the time being). This gets us closer to the 1.15402 bits/symbol we are looking for, but not all the way there. The reason is that we are constrained to using a whole number of bits for each code, so we end up wasting a fraction of a bit on each one.
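To see how much pairing helps, here is a minimal sketch that computes the average Huffman code length directly. It relies on the fact that each merge in Huffman's algorithm adds one bit to every symbol beneath it, so the expected code length is the sum of all merged probabilities. It assumes independent symbols, as in the paragraph above, and the helper name is illustrative. For the ABCD probabilities, coding pairs lowers the cost from 1.35 to 1.16875 bits/symbol, which is still above the entropy of 1.15402.

```python
import heapq
import itertools

def huffman_average_length(probs):
    """Expected Huffman code length, in bits per coded block.

    Each merge of the two least likely nodes adds one bit to every symbol
    underneath them, so the expected length is the sum of all merged weights.
    """
    heap = list(probs)
    heapq.heapify(heap)
    total = 0.0
    while len(heap) > 1:
        merged = heapq.heappop(heap) + heapq.heappop(heap)
        total += merged
        heapq.heappush(heap, merged)
    return total

single = {"A": 0.75, "B": 0.15, "C": 0.05, "D": 0.05}
# Pair probabilities assume independent symbols: P(xy) = P(x) * P(y).
pairs = [single[x] * single[y] for x, y in itertools.product(single, repeat=2)]

one = huffman_average_length(single.values())
two = huffman_average_length(pairs) / 2   # two symbols per pair code
print(f"single symbols: {one:.5f} bits/symbol")  # 1.35000
print(f"pairs:          {two:.5f} bits/symbol")  # 1.16875
```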

Arithmetic Coding

Enter Arithmetic Coding, invented in the 1970s at IBM and published in the IBM Journal of Research and Development. It basically allows us to output fractional bits for each symbol, so that overall the number of bits used approaches the Entropy we calculated above (the Arithmetic Coding algorithm gets us to within 1 byte of the Entropy for our entire sequence, so let’s call that good enough). There are many descriptions of how AC works, starting with Arithmetic Coding. We are not going to go into the details here.
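Without going into the details of practical coders, a toy version shows the core idea. Each symbol narrows an interval inside [0, 1) in proportion to its probability, and the output is the shortest binary fraction that falls inside the final interval. This is a minimal sketch that uses Python's exact `Fraction` arithmetic instead of the fixed-precision integers and incremental bit output that real coders use. It also assumes that the decoder is told how many symbols to read.

```python
import math
from fractions import Fraction

# Symbol probabilities from the ABCD example, as exact fractions.
PROBS = {"A": Fraction(3, 4), "B": Fraction(3, 20), "C": Fraction(1, 20), "D": Fraction(1, 20)}

def intervals(probs):
    """Give each symbol its own slice [low, high) of [0, 1)."""
    table, low = {}, Fraction(0)
    for sym, p in probs.items():
        table[sym] = (low, low + p)
        low += p
    return table

def encode(message, probs=PROBS):
    table = intervals(probs)
    low, width = Fraction(0), Fraction(1)
    for sym in message:  # narrow the interval to this symbol's slice
        s_low, s_high = table[sym]
        low, width = low + width * s_low, width * (s_high - s_low)
    # Emit the shortest binary fraction n / 2**k lying in [low, low + width).
    k = 0
    while True:
        k += 1
        n = math.ceil(low * 2**k)  # smallest n with n / 2**k >= low
        if Fraction(n, 2**k) < low + width:
            return format(n, f"0{k}b")

def decode(bits, length, probs=PROBS):
    table = intervals(probs)
    value = Fraction(int(bits, 2), 2 ** len(bits))
    low, width = Fraction(0), Fraction(1)
    out = []
    for _ in range(length):  # the decoder must know how many symbols to read
        target = (value - low) / width
        for sym, (s_low, s_high) in table.items():
            if s_low <= target < s_high:
                out.append(sym)
                low, width = low + width * s_low, width * (s_high - s_low)
                break
    return "".join(out)

msg = "AAABAAACAAAAABAD"
bits = encode(msg)
info = sum(-math.log2(PROBS[s]) for s in msg)  # information content in bits
print(len(msg), "symbols ->", len(bits), "bits; information content", round(info, 2), "bits")
print(decode(bits, len(msg)) == msg)
```

For this 16-symbol message the information content is about 19.1 bits, so the output is at most 20 bits, compared with 22 bits for the Huffman code in the table above.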

Context

In any real file, the individual bytes do not show up with independent probabilities. For example, in an English language text file, the letter “u” is very likely to show up after the letter “q”, far more likely than its overall frequency of occurrence in the file would suggest. How do we take advantage of this information? It turns out that with Arithmetic Coding (AC) this is very straightforward. At each step, AC just needs the probability of each symbol, and it can output the proper bit sequence to encode that symbol. The decoder just needs the same probabilities provided to it, and it can recover the original symbol sequence.

One way that file compression algorithms take advantage of this is by building models (or tables) as a file is processed, which try to predict what the next symbol will be. The prediction takes the form of a probability for each symbol. In a technique known as Prediction by Partial Matching (PPM), we look at the previously seen symbols and then predict the next symbol. For example, if we see “sequ” we might give a high probability to the next symbol being “e”. This is done by keeping track of how many times each symbol has been seen in each context (that is, after each sequence of previous symbols). Where it gets difficult is that you want the probabilities to be adaptive, so they can adjust to different parts of the file. We also cannot just compute these probabilities by context over the entire file and use those for encoding, because they would have to be sent along to the decoder. While this would result in extremely good compression ratios, the size of that data (the probability for every context) would be huge and would negate the space savings.
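The key property is that an adaptive model only uses symbols that have already been coded, so the decoder can maintain an identical copy and no table has to be transmitted. The sketch below is not PPM itself (which blends several context lengths and uses escape symbols); it simply adds up the ideal code length, -log2 p per byte, that an arithmetic coder would approach, once with no context and once with the previous byte as context. The add-one (Laplace) estimate and the sample text are assumptions chosen for illustration.

```python
import math
from collections import defaultdict

ALPHABET = 256  # every byte value gets a pseudo-count of 1 (Laplace smoothing)

def adaptive_cost_bits(data: bytes, order: int) -> float:
    """Ideal compressed size in bits (sum of -log2 p) under an adaptive model.

    order=0: no context; order=1: the previous byte is the context.
    Encoder and decoder both start with empty counts and update them only
    after a byte is coded, so the decoder can rebuild the same probabilities.
    """
    counts = defaultdict(lambda: [0] * ALPHABET)  # context -> count per byte value
    totals = defaultdict(int)                     # context -> total bytes seen
    prev = None                                   # no previous byte at the start
    bits = 0.0
    for byte in data:
        ctx = prev if order == 1 else None
        p = (counts[ctx][byte] + 1) / (totals[ctx] + ALPHABET)
        bits -= math.log2(p)
        counts[ctx][byte] += 1   # adapt after coding, exactly as the decoder would
        totals[ctx] += 1
        prev = byte
    return bits

text = b"the queen quietly quoted the question " * 300
for order in (0, 1):
    print(f"order {order}: {adaptive_cost_bits(text, order) / len(text):.3f} bits/byte")
```

On text like this, where "q" is always followed by "u", the order-1 model needs far fewer bits per byte once it has seen enough data to learn the transitions.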

For a bunch of reasons, a variation of this method, Bitwise Compression, was developed. It works basically the same way, but each symbol being encoded is a single bit from the source file. That is, we only have 2 symbols, “0” and “1”. This makes everything simpler, including the AC algorithm, which now only needs the probability of a 1 to encode each bit. A good question to ask is: are we losing anything by doing this Bitwise Compression? At first glance, it seems like you might be taking up extra space, because each bit has to be encoded separately rather than entire bytes.

Bitwise Compression

Now, one thing is obvious: if we did not use any context, this would not work. Without any context, each bit is likely to be close to 50/50, so we would gain little or no compression benefit. So what I want to show here is that compressing by bytes is equivalent to compressing by bits, using the bits of the current byte encoded so far as the context.

Let’s start by comparing two methods of compressing a text file:

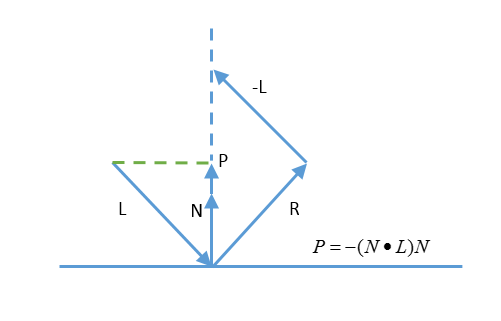

(1) Compress each byte based on its probability of occurrence in the file, with no context. This is easily estimated by simply computing the probability of each byte (based on the number of times it occurs in the file), then computing the entropy of those probabilities.

(2) Compress each byte one bit at a time, where the context for each bit prediction is the bits of the current byte seen so far. For the first bit, the context is empty, so the first bit is predicted from the probability that the first bit of a byte is 1. The second bit is predicted using a context of ‘0’ or ‘1’, depending on what the first bit was, and so on. A simple way to handle the bit context in Python is to make a context value which, written in binary, is a ‘1’ followed by the actual bits seen so far. The leading 1 distinguishes 3 zeros seen from 1 zero seen: 1000 (0x8) means 3 zeros seen, 10 (0x2) means a single 0 seen, and 1 (0x1) means nothing seen yet (the first bit of a byte). In the accompanying code, this is called the bitcontext.
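Here is one way to generate the bitcontext described above, as a minimal Python sketch (the function name `bitcontexts` is illustrative and may not match the accompanying code). Because the context always lies between 1 and 255, it can directly index a 256-entry table of bit statistics.

```python
def bitcontexts(byte):
    """Yield (bitcontext, bit) for each bit of `byte`, most significant first.

    The bitcontext is 1 followed by the bits already seen in this byte, so
    0b1 = nothing seen yet, 0b10 = one 0 seen, 0b1000 = three 0s seen.
    """
    ctx = 1
    for shift in range(7, -1, -1):
        bit = (byte >> shift) & 1
        yield ctx, bit
        ctx = (ctx << 1) | bit  # append the bit that was just coded

for ctx, bit in bitcontexts(ord("e")):  # 'e' is 0x65 = 0b01100101
    print(f"context {ctx:#x} ({ctx:b}) -> next bit {bit}")
# contexts: 0x1, 0x2, 0x5, 0xb, 0x16, 0x2c, 0x59, 0xb2
```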

You can follow along with the Python code in the related GitLab repository, which you can get by doing

git clone https://gitlab.com/dgrunberg/bitwise-compress.git

If you have Python 3.5 or later, you should be able to run this code, like this:

python study-bits.py --meg 1 --input enwik8

Here, enwik8 is the 100MB of Wikipedia data you can get from the Hutter Prize site. This will perform the analysis on the first 1,000,000 bytes of enwik8. The estimated compression size it prints is computed by calculating the entropy of the probabilities that are fed to the AC.

The results are:

| Method – no previous bytes context + bitcontext | Compression Ratio |
|---|---|
| Entropy by byte: 5.0589 bits/byte | 0.6324 |
| Encode by bit – Estimated bits out by calculating entropy | 0.6324 |
| Encode by bit – Actual bits output | 0.6324 |

So you can see that, for this example file, encoding bit by bit using only the bitcontext gives the same result as encoding based on byte probabilities.
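Why are the numbers identical? By the chain rule, the probability of a byte equals the product of the probabilities of its bits, each conditioned on its bitcontext, so the two entropies add up to exactly the same total. The sketch below checks this numerically with static counts over a whole buffer. It is an illustration, not the study-bits.py code, and the sample text is arbitrary.

```python
import math
from collections import Counter

def byte_entropy_bits(data: bytes) -> float:
    """Total bits if each byte is coded with its overall frequency (method 1)."""
    n = len(data)
    return -sum(c * math.log2(c / n) for c in Counter(data).values())

def bitwise_entropy_bits(data: bytes) -> float:
    """Total bits if each bit is coded with a static P(1) per bitcontext (method 2)."""
    seen = Counter()  # bitcontext -> times it occurred
    ones = Counter()  # bitcontext -> times the next bit was 1
    for byte in data:
        ctx = 1
        for shift in range(7, -1, -1):
            bit = (byte >> shift) & 1
            seen[ctx] += 1
            ones[ctx] += bit
            ctx = (ctx << 1) | bit
    total = 0.0
    for ctx, n in seen.items():
        for k in (ones[ctx], n - ones[ctx]):  # number of 1s, then number of 0s
            if k:
                total -= k * math.log2(k / n)
    return total

sample = b"the quick brown fox jumps over the lazy dog " * 100
by_byte = byte_entropy_bits(sample) / len(sample)
by_bit = bitwise_entropy_bits(sample) / len(sample)
print(f"{by_byte:.6f} vs {by_bit:.6f} bits/byte")  # the two values agree
```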

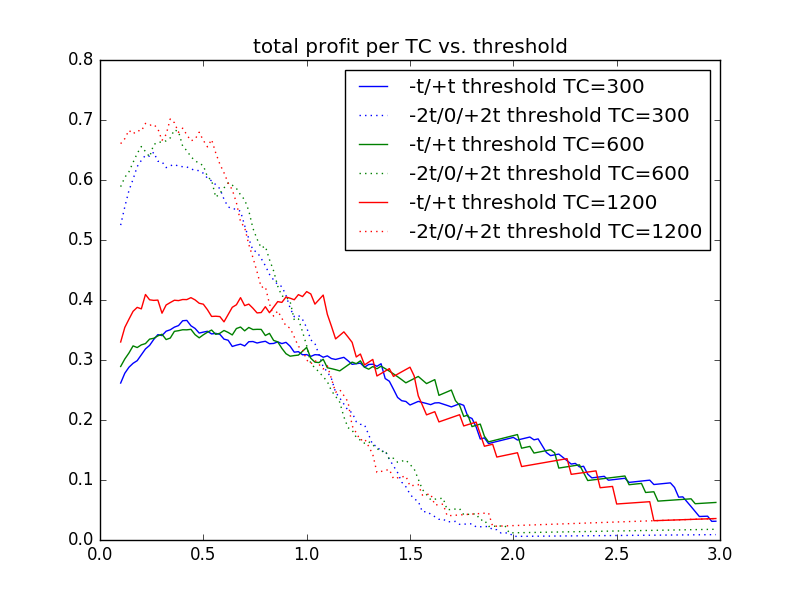

Now, for a preview of how context can help with encoding, let us consider using the single previous byte as context. The results are:

| Method – single previous byte as context + bitcontext | Compression Ratio |
|---|---|
| Encode by bit – Estimated bits out by calculating entropy | 0.4793 |
| Encode by bit – Actual bits output | 0.4794 |
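Here is a minimal sketch of the same idea in adaptive form. Each context keeps running counts of 0s and 1s, and the probability of a 1 is estimated from those counts before the bit is coded. The context is the bitcontext, optionally paired with the previous byte. The Krichevsky-Trofimov estimate (add 0.5 to each count), the starting value of the previous byte, and the sample text are assumptions for illustration. This is not the code from the repository, and its numbers will differ from the table.

```python
import math
from collections import defaultdict

def adaptive_bitwise_cost(data: bytes, use_prev_byte: bool) -> float:
    """Sum of -log2 P(bit) over all bits for an adaptive bit-by-bit model.

    Context = bitcontext (1 followed by the bits of the current byte seen so
    far), optionally combined with the previous byte. P(1) uses the
    Krichevsky-Trofimov estimate (n1 + 0.5) / (n0 + n1 + 1).
    """
    counts = defaultdict(lambda: [0, 0])  # context -> [zeros, ones]
    prev = 0                               # assumed "previous byte" before the first one
    bits = 0.0
    for byte in data:
        ctx = 1
        for shift in range(7, -1, -1):
            bit = (byte >> shift) & 1
            key = (prev if use_prev_byte else None, ctx)
            n0, n1 = counts[key]
            p1 = (n1 + 0.5) / (n0 + n1 + 1.0)  # predicted probability of a 1
            bits -= math.log2(p1 if bit else 1.0 - p1)
            counts[key][bit] += 1              # update after coding, as the decoder would
            ctx = (ctx << 1) | bit
        prev = byte
    return bits

text = b"the queen quietly quoted the question " * 300
for flag in (False, True):
    ratio = adaptive_bitwise_cost(text, flag) / (8 * len(text))
    print(f"previous byte as context: {flag}, compression ratio {ratio:.4f}")
```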

So we went from a compression ratio of 0.6324 to 0.4794 by using the single previous byte as context. If you follow the history of compression programs on Matt Mahoney’s excellent site, you will see that getting from here to the current record holder's ratio of about 0.15 takes a number of additional techniques:

- An adaptive probability model is used, where the count of occurrences in each context is encoded into a single byte (called a bit history). This gets updated through a table lookup each time the context is encountered.

- Each count is mapped to a probability through a single table for the entire model; the table gets updated as each bit is revealed.

- Many different models are used, including various numbers of previous bytes and some unigram word models.

- These models are combined linearly (in what is called the mixer), with the weights adjusted adaptively.

- This mixed probability is then run through SSE (Secondary Symbol Estimation), which is another adaptively updated table that corrects the probability. Sometimes 2 or more SSE stages are used.

- More than one set of weights is used, where the particular set to use is chosen based on some other context characteristics.

- A preprocessor and dictionary are used, which replace many of the words in the file with dictionary entries and escape codes.
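The mixer is probably the least familiar piece, so here is a minimal sketch of one common form used in PAQ-style compressors. Each model's P(1) is "stretched" into the logit domain, the stretched values are combined linearly with weights, and the result is squashed back into a probability. After each bit, the weights take a small gradient step that reduces the coding cost. The learning rate, initial weights, and floating-point arithmetic are assumptions for illustration; real compressors use fixed-point integers and choose among several weight sets by context.

```python
import math

def stretch(p):
    """Logit: ln(p / (1 - p)), for 0 < p < 1."""
    return math.log(p / (1.0 - p))

def squash(x):
    """Inverse of stretch (the logistic function)."""
    return 1.0 / (1.0 + math.exp(-x))

class Mixer:
    """Combine several P(bit = 1) predictions with adaptively learned weights."""

    def __init__(self, n_inputs, learning_rate=0.02):
        self.weights = [1.0 / n_inputs] * n_inputs  # hypothetical starting weights
        self.lr = learning_rate                     # hypothetical step size
        self.inputs = []
        self.p = 0.5

    def predict(self, probs):
        self.inputs = [stretch(p) for p in probs]
        dot = sum(w * x for w, x in zip(self.weights, self.inputs))
        self.p = squash(dot)  # linear in the stretched domain, then back to [0, 1]
        return self.p

    def update(self, bit):
        # d(-ln P(bit)) / d(dot) = -(bit - p), so step each weight along +err * input.
        err = bit - self.p
        self.weights = [w + self.lr * err * x for w, x in zip(self.weights, self.inputs)]

mixer = Mixer(2)
for _ in range(200):              # a stream of 1 bits
    mixer.predict([0.8, 0.3])     # model 0 is right more often than model 1
    mixer.update(1)
print(round(mixer.predict([0.8, 0.3]), 3))  # weight has shifted toward model 0
```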

-THE END

Notes:

1. E is just one single dot. The second most common letter, T, is a single dash.

2. One minor point I glossed over: since the codes are in general of different lengths, we have to be able to decode them without any specific indication of where each code ends. That is, we need to be able to parse out the individual bit sequences from the overall stream. The property of the chosen codes that ensures this will happen is called the prefix property. This means that no code can be a prefix (first part) of any other code. Huffman Codes have this property.
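A minimal sketch of why the prefix property is enough: the decoder reads bits one at a time and emits a symbol as soon as the bits so far match a codeword, since a complete codeword can never be the start of a longer one. It uses the codes from the Huffman table above; `decode_prefix` is an illustrative name.

```python
CODES = {"A": "1", "B": "01", "C": "001", "D": "000"}  # from the Huffman table

def decode_prefix(bits, codes=CODES):
    """Decode a bit string by reading bits until they match a codeword.

    No separators are needed because no codeword is a prefix of another.
    """
    lookup = {code: sym for sym, code in codes.items()}
    out, current = [], ""
    for b in bits:
        current += b
        if current in lookup:  # a complete codeword has been read
            out.append(lookup[current])
            current = ""
    if current:
        raise ValueError(f"trailing bits {current!r} do not form a codeword")
    return "".join(out)

encoded = "".join(CODES[s] for s in "ABACAD")
print(encoded, "->", decode_prefix(encoded))  # 10110011000 -> ABACAD
```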